Written by Joe Winchester, Member of the Zowe Community, Open Mainframe Project Ambassador and Senior Technical Lead at IBM

The Open Mainframe Project’s Zowe community is excited to announce that its 1.23 software release is now available. The Zowe community puts out new software every six weeks. After each release the squads give a live system demo to showcase the new content, and the calendar invites for these demos can be found on the OMP calendar. You can read about some of the main highlights of new and updated content for 1.23 below, or watch the system demo video that was recorded on August 4.

Zowe vNext

Zowe is currently in its version 1 long term support active phase, during which APIs don’t change to ensure that extensions built against the v1 API and its associated conformance program remain functional when the base code is upgraded. In preparation for version 2 the zowe.org/download page now includes early drops of the code for the Zowe Explorer, as well as the Zowe Command Line Interface (CLI) and the Zowe Client SDK for Node.js that underpins both of these components. As well as code drops, the site also includes links to documentation around the new vNext features, for CLI, Node.js Client SDK, and Zowe Explorer.

Because vNext isn’t yet hardened in terms of final APIs or functionality, please try it out and give the squad any feedback for things you like or don’t like, either in github.com/zowe issues or else in the CLI or Zowe Explorer slack channels.

Team Configuration

One of the big ticket items for vNext is support for team configuration files to control profiles. For customers who have to manage Zowe Explorer or CLI on many desktops and want a central way to share system information, this is a great upcoming feature. Currently endpoint information is stored one profile per file in a user’s home directory, e.g. ~/.zowe/profiles/zosmf/test_system.yaml or ~/.zowe/profiles/zosmf/production_system.yaml. If you have a lot of profiles and need to update shared information this can be problematic (although baseProfile helps to mitigate this by centralizing common information such as --host, --port, and other configuration detail).

What if you’re working in a team, however, where the common information around host, port, certificate trust, and other details shared across users needs to be changed on everyone’s PC in their individual home directory? This is where team configuration comes in, with a zowe.config.json file. This file can sit in a GitHub repo and be checked out by team members, who locally store any secure properties (such as their credentials) yet inherit all of the shared information that is unchanged between team members.

A very nice feature to assist with setup is that if the z/OS API Mediation Layer is installed and the services you wish to connect CLI profiles against are registered to the gateway, then you can auto configure from the bottom up using zowe configure auto-init. This helps scale up collaboration between team members using the Zowe CLI, Node.js SDK or Zowe Explorer. Vendor extensions that have Zowe CLI and Zowe API Mediation Layer conformance badges can benefit from this by providing the serviceID of their API service in their CLI plugin.
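The idea of shared settings combined with locally held secure properties can be illustrated with a small sketch. The dictionary layout, the property names and the `merge_config` helper below are assumptions made for illustration only; they are not the actual zowe.config.json schema or any Zowe API.

```python
import copy


def merge_config(shared: dict, local: dict) -> dict:
    """Return the shared settings overlaid with a user's local values."""
    merged = copy.deepcopy(shared)
    for key, value in local.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            # Merge nested sections key by key instead of replacing them.
            merged[key] = merge_config(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Hypothetical shared settings, as might be checked into a team repository.
team_config = {
    "zosmf": {"host": "mainframe.example.com", "port": 443},
}

# Hypothetical per-user values that stay on the user's own machine.
user_config = {
    "zosmf": {"user": "ibmuser", "password": "not-a-real-password"},
}

effective = merge_config(team_config, user_config)
print(effective["zosmf"]["host"], effective["zosmf"]["user"])
```

A change to the host or port in the shared file reaches every team member on their next checkout, while credentials never leave the individual machine.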

Secure Credentials Store

Folks who have used the CLI secure credentials store plugin, @zowe/secure-credential-store-for-zowe-cli, will know that it lets you store profile information such as passwords or authentication tokens securely on your laptop (i.e. not in clear text files). The plugin no longer needs to be added post install on top of CLI core, and is now included with the base.

CLI Daemon

vNext also includes the CLI daemon mode, which aids performance and which you can read about in this great medium.com/zowe blog post. The daemon is a long running process started using start zowe --daemon, after which you can use zowex in place of zowe for all CLI commands and have them routed to the daemon process. Because the daemon is already active, this avoids the prolog and epilog Node.js steps, and their associated CPU time, that surround every command. If you are using v1 LTS of Zowe CLI you can get the zowex daemon from GitHub by downloading zowex.tgz for your operating system.

Authentication based server side load balancing

In the current program increment the APIML squad are working to provide deterministic load balancing capability in the API gateway. The API gateway is a reverse proxy server that listens on a single port between client API requests and the server(s) that can respond to them, forwarding each request to the server that is best able to service the API.

To illustrate load balancing and explicit client routing, consider the scenario where there are three instances of a service. In a traditional round robin model, if a client sends 6 concurrent API requests to the gateway they will go to server instances 1, 2, 3, 1, 2, 3. What if instead the client doesn’t want this and would prefer all 6 requests to go to the same server? This could be for a number of reasons. Perhaps the conversation isn’t truly stateless, and for requests 2 through 6 to work correctly they must go to the same instance that processed request 1. This is known as “sticky sessions” and might be because the server is using a TSO session or other mainframe service that allocates z/OS resources for a single session. Another scenario might be performance optimization, where having all 6 requests use a single server instance is more efficient for speed, memory, or other compute resources than having the requests processed by 3 different instances.
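The difference between the two models in this scenario can be shown with a short simulation. It is only a sketch of the routing decision, with hypothetical function names, and has nothing to do with how the API gateway is implemented.

```python
from itertools import cycle


def round_robin(instances, request_count):
    """Hand each request to the next instance in turn."""
    picker = cycle(instances)
    return [next(picker) for _ in range(request_count)]


def sticky(instances, request_count):
    """Pick an instance for the first request and reuse it for the rest."""
    chosen = next(cycle(instances))
    return [chosen] * request_count


instances = [1, 2, 3]
round_robin_targets = round_robin(instances, 6)
sticky_targets = sticky(instances, 6)

print(round_robin_targets)  # [1, 2, 3, 1, 2, 3]
print(sticky_targets)       # [1, 1, 1, 1, 1, 1]
```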

Header based load balancing

To enable this feature set the instance.env value APIML_ROUTING_INSTANCEIDHEADER=true. This triggers the API Gateway to return the response header X-InstanceId, whose value will be the ID of the actual server the request was routed to. The header data matches the instanceId that can be queried on the /services API and is shown in the Eureka UI for the discovery client. The result is that every API response now includes a header such as X-InstanceId=abc.service.id:1001 or X-InstanceId=abc.service.id:1002 and so forth, depending on which instance handled the routed request. Clients can then target a particular server by adding X-InstanceId=abc.service.id:1001 to their request header. To allow this to occur the registered service must have specified “apiml.lb.type” : “headerRequest”.
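The request and response flow for the instance header can be sketched as a toy router. The `Gateway` class is hypothetical and is not APIML code; the fallback to round robin when the header is absent or names an unknown instance is an assumption of this sketch, and headers are matched by exact case for simplicity.

```python
from itertools import cycle

INSTANCE_HEADER = "X-InstanceId"


class Gateway:
    """Toy router that honors an instance header sent by the client."""

    def __init__(self, instance_ids):
        self._instance_ids = list(instance_ids)
        self._round_robin = cycle(self._instance_ids)

    def route(self, request_headers):
        requested = request_headers.get(INSTANCE_HEADER)
        if requested in self._instance_ids:
            # The client asked for a specific, known instance.
            target = requested
        else:
            # Assumption: otherwise fall back to plain round robin.
            target = next(self._round_robin)
        # The response header tells the client which instance answered.
        return target, {INSTANCE_HEADER: target}


gateway = Gateway(["abc.service.id:1001", "abc.service.id:1002"])

# The first request carries no header, so the gateway chooses an instance.
first_target, response_headers = gateway.route({})

# Later requests echo the header back to stay on the same instance.
pinned_targets = [
    gateway.route({INSTANCE_HEADER: response_headers[INSTANCE_HEADER]})[0]
    for _ in range(3)
]
print(first_target, pinned_targets)
```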

Authentication Load Balancing

To enable this feature set the instance.env value APIML_LOADBALANCER_DISTRIBUTE=true. When the client authenticates to the API Gateway, the gateway-service cookie now includes an attribute Domain=<URL>. This is the host of the gateway, and when it is passed on the header to the gateway with the matching domain it means that the serviceID is sticky towards the one used when the user first logged on (i.e. created their authentication token, which is also stored in the gateway-service cookie). To enable a service to participate in this kind of load balancing, where client requests are always sent to the service that did the login, the registered service must specify “apiml.lb.type” : “authentication”. The default length of time for which all requests are routed to a sticky authentication server is 8 hours, although this can be configured up (or down). Resiliency is provided by the APIML Caching Service, which allows this to work in a highly available setup where there is more than one gateway in a distributed sysplex environment.
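A minimal sketch of sticky routing with an expiry is shown here, to make the 8 hour window concrete. The `StickyRouter` class, the in-memory dictionary and the use of a user name as the key are all assumptions for illustration; the real gateway works from the authentication token and shares state through the Caching Service.

```python
import time

STICKY_SECONDS = 8 * 60 * 60  # the default of 8 hours mentioned above


class StickyRouter:
    """Toy router that keeps each user on one instance until expiry."""

    def __init__(self, instance_ids, clock=time.time):
        self._instance_ids = list(instance_ids)
        self._clock = clock
        self._next_index = 0
        self._sessions = {}  # user -> (instance id, expiry timestamp)

    def _pick_next(self):
        index = self._next_index % len(self._instance_ids)
        self._next_index += 1
        return self._instance_ids[index]

    def route(self, user):
        now = self._clock()
        entry = self._sessions.get(user)
        if entry is None or entry[1] <= now:
            # First request, or the sticky period ended: choose afresh.
            entry = (self._pick_next(), now + STICKY_SECONDS)
            self._sessions[user] = entry
        return entry[0]


# A fake clock makes the expiry visible without waiting 8 hours.
fake_now = [0.0]
router = StickyRouter(["svc:1", "svc:2", "svc:3"], clock=lambda: fake_now[0])

alice_first = router.route("alice")
bob_first = router.route("bob")
alice_again = router.route("alice")

fake_now[0] = STICKY_SECONDS + 1
alice_after_expiry = router.route("alice")
print(alice_first, bob_first, alice_again, alice_after_expiry)
```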

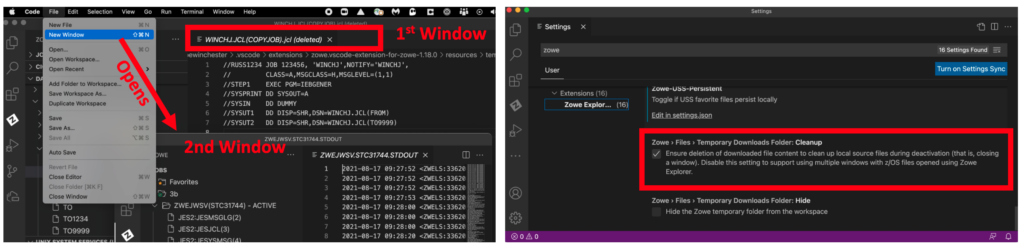

Zowe Explorer

Previously, if you opened a second window in the Zowe Explorer and then closed it, the folder containing the temporary local files used by VS Code (local shadows of the z/OS data set members or USS files) was always cleared. The files would then appear as (deleted) in the primary window, so you couldn’t save your work. To prevent this behavior a new user setting Zowe > Files > Temporary Downloads Folder: Cleanup can be set to false.

The Zowe Explorer now supports multi-select delete of more than one PDS member. The progress of the deletions is shown in the status pop-up, and a mix of PDS members from different data sets, sequential data sets, and a PDS itself can all be selected for deletion together. If there are any errors during the deletion then a single error is displayed for each deletion failure that occurred, and a single information pop-up is shown when the delete requests have finished.

Zowe Desktop

To allow features such as positioning the File Editor on the ~ directory for the TSO user accessing the desktop, the ZWESVUSR user must have READ access to IRR.RADMIN.LISTUSER, which grants it permission to obtain information about the OMVS segment of the user profile through the LISTUSER TSO command. This is a simplification of the previous setup, where the TSO user themselves needed to access the ESM. Full details on all of the permissions needed for ZWESVUSR are in the System Requirements chapter of the Zowe documentation, and this permission is now also included in the ZWESECUR setup JCL that prepares a z/OS environment for Zowe.

For high availability the Zowe Desktop can now register itself to multiple discovery servers, which helps for the scenario when one of them goes down or becomes unavailable. Previously the Zowe Desktop server only registered itself with a single discovery server. This is done with the parameter ZWE_DISCOVERY_SERVICES_LIST in instance.env that is a colon separated list of all of the available discovery servers in the HA environment.

To support High Availability (HA) scenarios the desktop now has to deal with requests coming from different hosts, so the cookies are now marked as SameSite=Strict, which is the highest level available to guard against any kind of cross-site request forgery (CSRF) attack and yet allows the Zowe Desktop server to faithfully honor genuine requests. Not all older browsers support cookies that are SameSite=Strict, so the recommendation is always to update client browsers to the latest releases to keep up with security fixes and enhancements in both clients and servers.

HA scenarios involve multiple distributed instances of Zowe’s server side components that can act interchangeably with each other. To achieve this, state needs to be shared, which is the job of the caching service. Some plugins may not wish to share data via the caching service, or perhaps aren’t written to use it yet, so there is now an explicit way for plugins to opt out of being HA ready. When the Zowe Desktop starts up in HA mode it will not load plugins that haven’t declared themselves as HA ready, which avoids any unpredictable runtime behavior.
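The loading rule can be pictured as a simple filter. The `haReady` key and the plugin names below are hypothetical; the article does not say how a plugin actually declares itself HA ready, so treat this purely as a sketch of the behavior.

```python
def plugins_to_load(plugins, ha_mode):
    """Return the plugins the desktop would load under this sketch's rule."""
    if not ha_mode:
        # Outside HA mode every plugin is loaded.
        return list(plugins)
    # In HA mode, skip plugins that have not declared themselves HA ready.
    return [plugin for plugin in plugins if plugin.get("haReady", False)]


# Hypothetical plugin descriptors.
plugins = [
    {"name": "editor", "haReady": True},
    {"name": "legacy-viewer"},  # no declaration, so treated as not ready
    {"name": "terminal", "haReady": False},
]

loaded_in_ha = [plugin["name"] for plugin in plugins_to_load(plugins, True)]
loaded_without_ha = [plugin["name"] for plugin in plugins_to_load(plugins, False)]
print(loaded_in_ha, loaded_without_ha)
```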

The File Editor has been enhanced so the mouse cursor state is saved tab to tab. This is great in the scenario where you have opened more than one data set member or USS file, are editing one file, and open another to find information to copy. When you go back to the first file it will have remembered the position, making copy/paste and general editing more usable. Another feature demo’d was the ability to download a USS file from the right mouse menu, which is great for doing file transfer from mainframe to PC. This feature didn’t make the 1.23 drop but will be in 1.24, and is available in staging builds for folks who are interested.

The Open Mainframe Project’s Zowe squads would love to hear your input, thoughts and feedback on anything related to the content, not just of the 1.23 release but of the product in general. What is missing? What do you find difficult? What area of mainframe modernization and extensibility are we missing? Please get in touch via Slack or github.com/zowe issues.